bw2calc.lca

Module Contents

Classes

LCA | Base class for single and multi LCA classes

Attributes

- class bw2calc.lca.LCA(demand: dict, method: tuple | None = None, weighting: str | None = None, normalization: str | None = None, data_objs: Iterable[pathlib.Path | fs.base.FS | bw_processing.DatapackageBase] | None = None, remapping_dicts: Iterable[dict] | None = None, log_config: dict | None = None, seed_override: int | None = None, use_arrays: bool | None = False, use_distributions: bool | None = False, selective_use: dict | None = False)

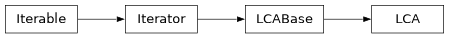

Bases: bw2calc.lca_base.LCABase

Base class for single and multi LCA classes

Create a new LCA calculation object.

Compatible with Brightway2 and 2.5 semantics. Can be static, stochastic, or iterative (scenario-based), depending on the data_objs input data.

This class supports both stochastic and static LCA, and can use a variety of ways to describe uncertainty. The input flags use_arrays and use_distributions control some of this stochastic behaviour. See the [documentation for matrix_utils](brightway-lca/matrix_utils) for more information on the technical implementation.

Parameters

- demand: dict[object: float]

The demand for which the LCA will be calculated. The keys can be Brightway Node instances, (database, code) tuples, or integer ids.

- method: tuple

Tuple defining the LCIA method, such as ('foo', 'bar'). Only needed if not passing data_objs.

- weighting: tuple

Tuple defining the LCIA weighting, such as ('foo', 'bar'). Only needed if not passing data_objs.

- normalization: string

String defining the LCIA normalization, such as 'foo'. Only needed if not passing data_objs.

- data_objs: list[bw_processing.Datapackage]

List of bw_processing.Datapackage objects. Can be loaded via bw2data.prepare_lca_inputs or constructed manually. Should include data for all needed matrices.

- remapping_dicts: dict[str, dict]

Dict of remapping dictionaries that link Brightway Node ids to (database, code) tuples. remapping_dicts can provide such remapping for any of activity, product, biosphere.

- log_config: dict

Optional arguments to pass to logging. Not yet implemented.

- seed_override: int

RNG seed to use in place of Datapackage seed, if any.

- use_arrays: bool

Use arrays instead of vectors from the given data_objs.

- use_distributions: bool

Use probability distributions from the given data_objs.

- selective_use: dict[str, dict]

Dictionary that gives more control over whether use_arrays or use_distributions should be used for individual matrices. Has the form {matrix_label: {"use_arrays"|"use_distributions": bool}}. Standard matrix labels are technosphere_matrix, biosphere_matrix, and characterization_matrix.
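The relationship between the global use_arrays / use_distributions flags and selective_use can be sketched as follows. This is only an illustration: resolve_flags is a hypothetical helper, not part of bw2calc, and it assumes that a per-matrix entry overrides the global flag while missing entries fall back to it.

```python
# Hypothetical helper, NOT part of bw2calc. It assumes per-matrix entries in
# selective_use override the global flags, and missing entries fall back to them.
def resolve_flags(matrix_label, use_arrays=False, use_distributions=False,
                  selective_use=None):
    """Return (use_arrays, use_distributions) for one matrix label."""
    overrides = (selective_use or {}).get(matrix_label, {})
    return (
        overrides.get("use_arrays", use_arrays),
        overrides.get("use_distributions", use_distributions),
    )


# Sample from distributions everywhere except the characterization matrix.
selective_use = {"characterization_matrix": {"use_distributions": False}}

techno_flags = resolve_flags(
    "technosphere_matrix", use_distributions=True, selective_use=selective_use
)
cf_flags = resolve_flags(
    "characterization_matrix", use_distributions=True, selective_use=selective_use
)
```

Under these assumptions the technosphere matrix keeps the global setting, while the characterization matrix stays static.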

- property score: float

The LCIA score as a float.

Note that this is a property, so it is accessed as lca.score, not called as lca.score().
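The difference between reading a property and calling a method can be shown with a toy class. ToyLCA below is a hypothetical stand-in written for this example; it is not the bw2calc class and only mimics the fact that score is a property.

```python
class ToyLCA:
    """Toy stand-in for an LCA object (hypothetical, not bw2calc.LCA)."""

    def __init__(self, characterized_values):
        self._values = list(characterized_values)

    @property
    def score(self) -> float:
        # Evaluated on attribute access, so no parentheses are needed.
        return float(sum(self._values))


toy = ToyLCA([1.5, 2.5])
total = toy.score  # plain attribute access returns the float

try:
    toy.score()  # this tries to call the returned float
    call_failed = False
except TypeError:
    call_failed = True
```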

- matrix_labels = ['technosphere_mm', 'biosphere_mm', 'characterization_mm', 'normalization_mm', 'weighting_mm']

- _switch(obj: tuple | Iterable[fs.base.FS | bw_processing.DatapackageBase], label: str, matrix: str, func: Callable) -> None

Switch a method, weighting, or normalization.

- build_demand_array(demand: dict | None = None) -> None

Turn the demand dictionary into a NumPy array of correct size.

- Args:

demand (dict, optional): Demand dictionary. Defaults to self.demand.

- Returns:

A 1-dimensional NumPy array
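As an illustration of what turning a demand dictionary into a vector involves, here is a minimal sketch in plain Python. It uses a list instead of a NumPy array to stay dependency-free, and both build_demand_vector and the product_index mapping ({product id: row index}) are hypothetical names chosen for this example, not bw2calc API.

```python
def build_demand_vector(demand, product_index):
    """Place each demanded amount at the row index of its product.

    `product_index` is a hypothetical {product id: row index} mapping.
    """
    vector = [0.0] * len(product_index)
    for key, amount in demand.items():
        if key not in product_index:
            raise KeyError(f"Demanded product {key!r} has no row index")
        vector[product_index[key]] = amount
    return vector


product_index = {101: 0, 102: 1, 103: 2}
demand_vector = build_demand_vector({102: 1.0}, product_index)
```

The vector has one entry per product and is zero everywhere except at the demanded products.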

- lci_calculation() -> None

The actual LCI calculation.

Separated from lci to be reusable in cases where the matrices are already built, e.g. redo_lci and Monte Carlo classes.

- lcia_calculation() -> None

The actual LCIA calculation.

Separated from lcia to be reusable in cases where the matrices are already built, e.g. redo_lcia and Monte Carlo classes.
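To make the two calculation steps concrete, the following sketch works through a tiny two-activity system in plain Python. It assumes the standard matrix formulation of LCA (solve the technosphere system for the supply vector, scale the biosphere matrix by it, then weight each flow by a characterization factor). All numbers and helper names are made up for this example; bw2calc operates on sparse matrices and this is not its implementation.

```python
def solve_2x2(a, f):
    """Solve a 2x2 linear system a @ s = f with Cramer's rule."""
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    if det == 0:
        raise ValueError("Technosphere matrix is singular")
    s0 = (f[0] * a[1][1] - a[0][1] * f[1]) / det
    s1 = (a[0][0] * f[1] - f[0] * a[1][0]) / det
    return [s0, s1]


# Columns are activities, rows are products (made-up numbers).
# Activity 1 consumes 2 units of product 0 per unit of product 1.
technosphere = [[1.0, -2.0],
                [0.0, 1.0]]
demand = [0.0, 1.0]  # one unit of product 1

# LCI step: how much each activity must run, then the scaled emissions.
supply = solve_2x2(technosphere, demand)
biosphere = [[0.5, 3.0],    # flow 0 emitted per unit of each activity
             [0.0, 0.25]]   # flow 1 emitted per unit of each activity
inventory = [[b * s for b, s in zip(row, supply)] for row in biosphere]

# LCIA step: weight each flow (row) by its characterization factor.
factors = [1.0, 28.0]
characterized = [[cf * x for x in row] for cf, row in zip(factors, inventory)]
score = sum(sum(row) for row in characterized)
```

Because the LCIA step only needs the inventory and the factors, it can be repeated without solving the linear system again.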

- load_lcia_data(data_objs: Iterable[fs.base.FS | bw_processing.DatapackageBase] | None = None) -> None

Load data and create characterization matrix.

This method will filter out regionalized characterization factors.

- load_normalization_data(data_objs: Iterable[fs.base.FS | bw_processing.DatapackageBase] | None = None) -> None

Load normalization data.

- load_weighting_data(data_objs: Iterable[fs.base.FS | bw_processing.DatapackageBase] | None = None) -> None

Load weighting data.

- normalization_calculation() -> None

The actual normalization calculation.

Creates self.normalized_inventory.

- switch_method(method=Union[tuple, Iterable[Union[FS, bwp.DatapackageBase]]]) -> None

Load a new method and replace .characterization_mm and .characterization_matrix.

Does not do any new calculations or change .characterized_inventory.

- switch_normalization(normalization=Union[tuple, Iterable[Union[FS, bwp.DatapackageBase]]]) -> None

Load a new normalization and replace .normalization_mm and .normalization_matrix.

Does not do any new calculations or change .normalized_inventory.

- switch_weighting(weighting=Union[tuple, Iterable[Union[FS, bwp.DatapackageBase]]]) -> None

Load a new weighting and replace .weighting_mm and .weighting_matrix.

Does not do any new calculations or change .weighted_inventory.
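The practical consequence of "does not do any new calculations" is that results stay stale until the corresponding calculation is run again. The toy class below sketches that pattern; ToySwitchLCA is hypothetical and only borrows the method names from this page, so it says nothing about how bw2calc stores its matrices.

```python
class ToySwitchLCA:
    """Toy model of the switch-then-recalculate pattern (not bw2calc code)."""

    def __init__(self, inventory, factors):
        self.inventory = inventory            # per-flow inventory totals
        self.characterization = factors       # per-flow characterization factors
        self.characterized_inventory = None   # filled by lcia_calculation()

    def lcia_calculation(self):
        self.characterized_inventory = [
            cf * amount
            for cf, amount in zip(self.characterization, self.inventory)
        ]

    def switch_method(self, factors):
        # Only the factors are replaced; existing results are left untouched.
        self.characterization = factors

    @property
    def score(self):
        return sum(self.characterized_inventory)


toy = ToySwitchLCA(inventory=[1.0, 2.0], factors=[1.0, 10.0])
toy.lcia_calculation()
score_before = toy.score

toy.switch_method([2.0, 5.0])
score_stale = toy.score      # unchanged: nothing has been recalculated yet

toy.lcia_calculation()
score_after = toy.score      # now reflects the new factors
```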

- to_dataframe(matrix_label: str = 'characterized_inventory', row_dict: dict | None = None, col_dict: dict | None = None, annotate: bool = True, cutoff: numbers.Number = 200, cutoff_mode: str = 'number') -> pandas.DataFrame

Return all nonzero elements of the given matrix as a Pandas dataframe.

The LCA class instance must already have the matrix matrix_label; common labels are:

characterized_inventory

inventory

technosphere_matrix

biosphere_matrix

characterization_matrix

For these common matrices, we already have row_dict and col_dict which link row and column indices to database ids. For other matrices, or if you have a custom mapping dictionary, override row_dict and/or col_dict. They have the form {matrix index: identifier}.

If bw2data is installed, this function will try to look up metadata on the row and column objects. To turn this off, set annotate to False.

Instead of returning all possible values, you can apply a cutoff. This cutoff can be specified in two ways, controlled by cutoff_mode, which should be either fraction or number.

If cutoff_mode is number (the default), then cutoff is the maximum number of rows in the DataFrame. Data values are first sorted by their absolute value, and then the largest cutoff values are taken.

If cutoff_mode is fraction, then only values whose absolute value is greater than cutoff * total_score are taken. cutoff must be between 0 and 1.

The returned DataFrame will have the following columns:

amount

col_index

row_index

If row or column dictionaries are available, the following columns are added:

col_id

row_id

If bw2data is available, then the following columns are added:

col_code

col_database

col_location

col_name

col_reference_product

col_type

col_unit

row_categories

row_code

row_database

row_location

row_name

row_type

row_unit

source_product

Returns a pandas DataFrame.
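The two cutoff modes described above can be sketched in plain Python on a list of (row_index, col_index, amount) entries. This is an illustration of the filtering logic only, not the to_dataframe implementation: apply_cutoff is a hypothetical helper, total_score is assumed to be the sum of all amounts, and the bounds on a fractional cutoff are assumed to be exclusive.

```python
def apply_cutoff(entries, cutoff=200, cutoff_mode="number"):
    """Filter (row_index, col_index, amount) entries by one of two cutoff modes."""
    if cutoff_mode == "number":
        # Keep the `cutoff` entries with the largest absolute amounts.
        ranked = sorted(entries, key=lambda e: abs(e[2]), reverse=True)
        return ranked[:cutoff]
    if cutoff_mode == "fraction":
        # Assumption: the bounds are exclusive.
        if not 0 < cutoff < 1:
            raise ValueError("cutoff must be between 0 and 1")
        # Assumption: total_score is the sum of all amounts.
        total_score = sum(amount for _, _, amount in entries)
        threshold = cutoff * total_score
        return [e for e in entries if abs(e[2]) > threshold]
    raise ValueError("cutoff_mode must be 'fraction' or 'number'")


entries = [(0, 0, 6.0), (1, 0, -3.0), (0, 1, 1.0)]

top_two = apply_cutoff(entries, cutoff=2, cutoff_mode="number")
above_half = apply_cutoff(entries, cutoff=0.5, cutoff_mode="fraction")
```

In this example both modes keep the same two entries: the total is 4.0, so a fractional cutoff of 0.5 keeps every entry whose absolute value exceeds 2.0.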